Overview

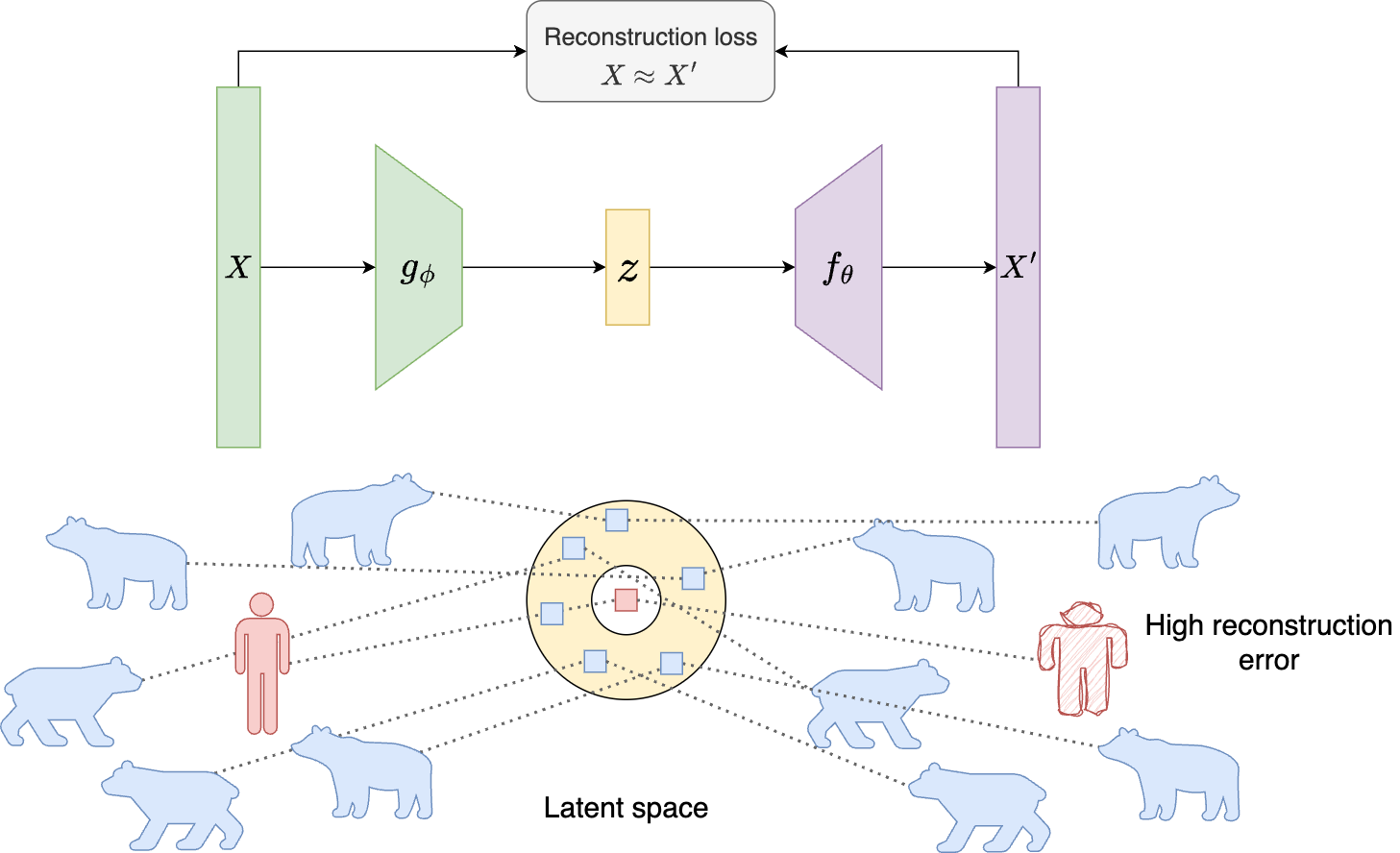

This report documents a reconstruction-based anomaly detector trained on regularly sampled InSAR time series. The core assumption is that anomalous samples are rare. The autoencoder therefore learns the dominant manifold of normal behavior and produces larger reconstruction errors for atypical points.
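As a rough illustration of how reconstruction errors can be turned into anomaly flags, the sketch below scores each sample by its mean squared reconstruction error and flags scores above an empirical quantile. The quantile rule, the function names, and the toy data are assumptions made for illustration; the report does not specify a thresholding scheme.

```python
import numpy as np

def reconstruction_scores(x, x_hat):
    """Per-sample mean squared reconstruction error."""
    x = np.asarray(x, dtype=float)
    x_hat = np.asarray(x_hat, dtype=float)
    return np.mean((x - x_hat) ** 2, axis=1)

def flag_anomalies(scores, quantile=0.99):
    """Flag samples whose score exceeds an empirical quantile (assumed rule)."""
    threshold = np.quantile(scores, quantile)
    return scores > threshold, threshold

# Toy data: 99 well-reconstructed samples and one poorly reconstructed one.
rng = np.random.default_rng(0)
x = rng.normal(size=(100, 8))
x_hat = x + rng.normal(scale=0.01, size=x.shape)
x_hat[42] += 1.0  # simulate a sample the autoencoder cannot reconstruct

scores = reconstruction_scores(x, x_hat)
flags, threshold = flag_anomalies(scores, quantile=0.99)
print("flagged indices:", np.flatnonzero(flags))
```

Because anomalies are assumed to be rare, a high quantile keeps the flagged fraction small; the exact value would need to be tuned per dataset.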

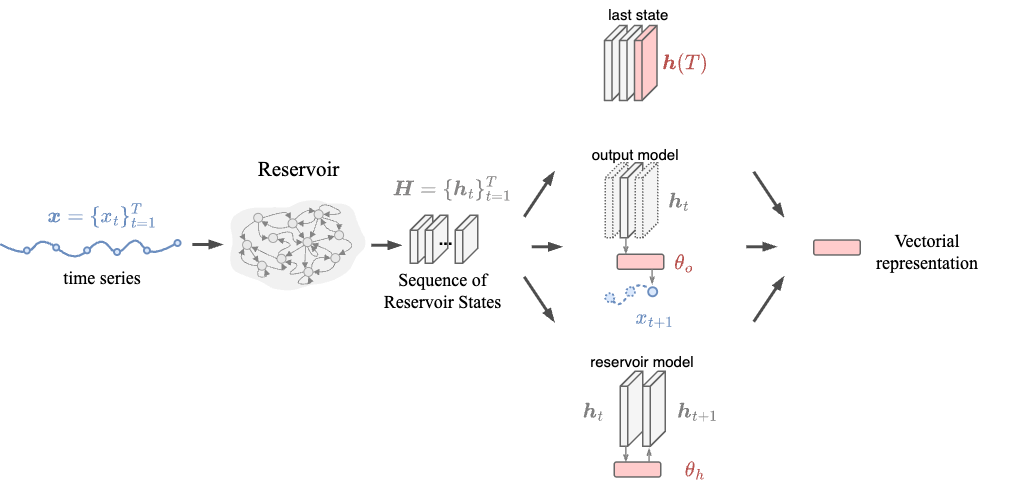

Time-Series Embedding with Reservoir Computing

As discussed in the RANSAC section, the time series are first regularized by filling missing timestamps with robust trend estimates. After regularization, each series is converted into a static embedding using Reservoir Computing.

Method Visual Overview

The vector representation can be, for example, the last reservoir state or readout weights trained on the sequence dynamics. For a detailed introduction to Reservoir Computing embeddings, see this reference.
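To make the last-reservoir-state variant concrete, here is a minimal echo state network sketch in NumPy. The reservoir size, spectral radius, input scaling, leak rate, and the function names are illustrative assumptions; the readout-weight variant mentioned above is not shown.

```python
import numpy as np

def make_reservoir(n_inputs, n_units=32, spectral_radius=0.9, seed=0):
    """Create fixed random input and recurrent weights (never trained)."""
    rng = np.random.default_rng(seed)
    w_in = rng.uniform(-0.5, 0.5, size=(n_units, n_inputs))
    w = rng.normal(size=(n_units, n_units))
    # Rescale so the largest eigenvalue magnitude equals spectral_radius.
    w *= spectral_radius / np.max(np.abs(np.linalg.eigvals(w)))
    return w_in, w

def last_state_embedding(series, w_in, w, leak=1.0):
    """Drive the reservoir with the series and return its final state."""
    series = np.asarray(series, dtype=float)
    if series.ndim == 1:
        series = series[:, None]  # treat as a univariate series
    state = np.zeros(w.shape[0])
    for u in series:
        pre_activation = w_in @ u + w @ state
        state = (1.0 - leak) * state + leak * np.tanh(pre_activation)
    return state

# Toy usage: embed two regularly sampled series of equal length.
w_in, w = make_reservoir(n_inputs=1)
t = np.linspace(0.0, 4.0 * np.pi, 100)
emb_sin = last_state_embedding(np.sin(t), w_in, w)
emb_cos = last_state_embedding(np.cos(t), w_in, w)
print(emb_sin.shape)
```

The same fixed reservoir must be reused for every series so that the resulting embeddings live in a common space.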

Model Architecture and Training

We use a symmetric fully connected encoder-decoder with a low-dimensional bottleneck.

    architecture:
      name: Simple-AE
      type: AutoEncoder
      hparams:
        encoder_dims: [32, 16, 8, 4]
        decoder_dims: [8, 16, 32]
        activation: "ReLU"
        batch_norm: True
        dropout: 0.1

The model minimizes mean squared reconstruction error (MSE). Inputs are reservoir embeddings without static features.
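The layer widths in the configuration can be read as the following stack of fully connected layers. The sketch below is a NumPy forward pass with untrained random weights, intended only to show the shapes and the MSE objective. It assumes a hypothetical embedding size of 64, a final linear layer mapping back to the input dimension, and ReLU on every hidden layer including the bottleneck; batch normalization and dropout are omitted for brevity.

```python
import numpy as np

ENCODER_DIMS = [32, 16, 8, 4]   # from the configuration; 4 is the bottleneck
DECODER_DIMS = [8, 16, 32]      # from the configuration

def init_autoencoder(input_dim, seed=0):
    """Create (weight, bias) pairs for input -> encoder -> decoder -> input."""
    rng = np.random.default_rng(seed)
    widths = [input_dim] + ENCODER_DIMS + DECODER_DIMS + [input_dim]
    params = []
    for n_in, n_out in zip(widths[:-1], widths[1:]):
        w = rng.normal(scale=np.sqrt(2.0 / n_in), size=(n_in, n_out))  # He init
        params.append((w, np.zeros(n_out)))
    return params

def forward(params, x):
    """Reconstruct x; ReLU on hidden layers, linear output layer."""
    h = x
    for k, (w, b) in enumerate(params):
        h = h @ w + b
        if k < len(params) - 1:
            h = np.maximum(h, 0.0)
    return h

def mse(x, x_hat):
    """Mean squared reconstruction error over all samples and features."""
    return float(np.mean((x - x_hat) ** 2))

# Toy usage with a hypothetical embedding size of 64.
rng = np.random.default_rng(1)
x = rng.normal(size=(5, 64))
params = init_autoencoder(input_dim=64)
x_hat = forward(params, x)
loss = mse(x, x_hat)
print([w.shape for w, _ in params])
```

In practice the weights would be fitted by gradient descent on this loss in a deep learning framework, which would also supply the batch normalization and dropout layers listed in the configuration.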

Results

| Dataset | Train MAE | Test MAE |
|---|---|---|
| Lyngen | 0.108 | 0.109 |
| Nordnes | 0.108 | 0.109 |
| Svalbard | 0.084 | 0.085 |

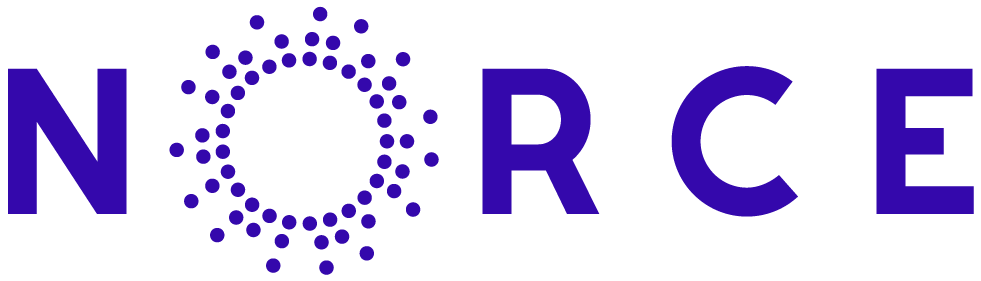

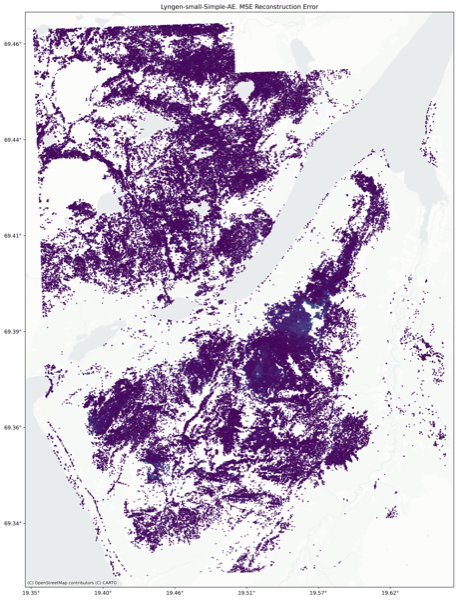

Lyngen

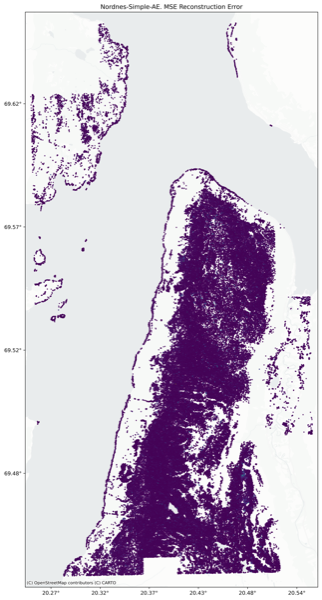

Nordnes

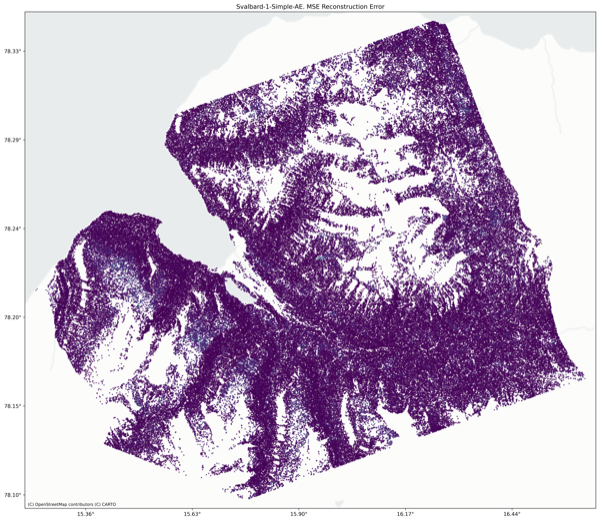

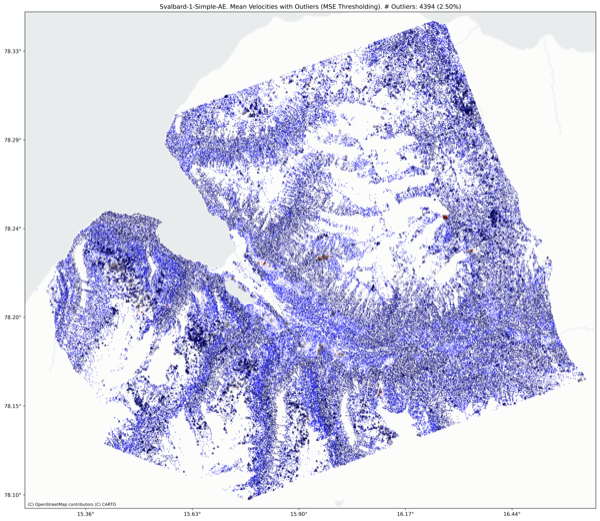

Svalbard

Limitations

- No explicit spatial or relational structure is modeled.

- The embedding stage is unsupervised and optimized independently from the downstream anomaly objective.